Recent applied research


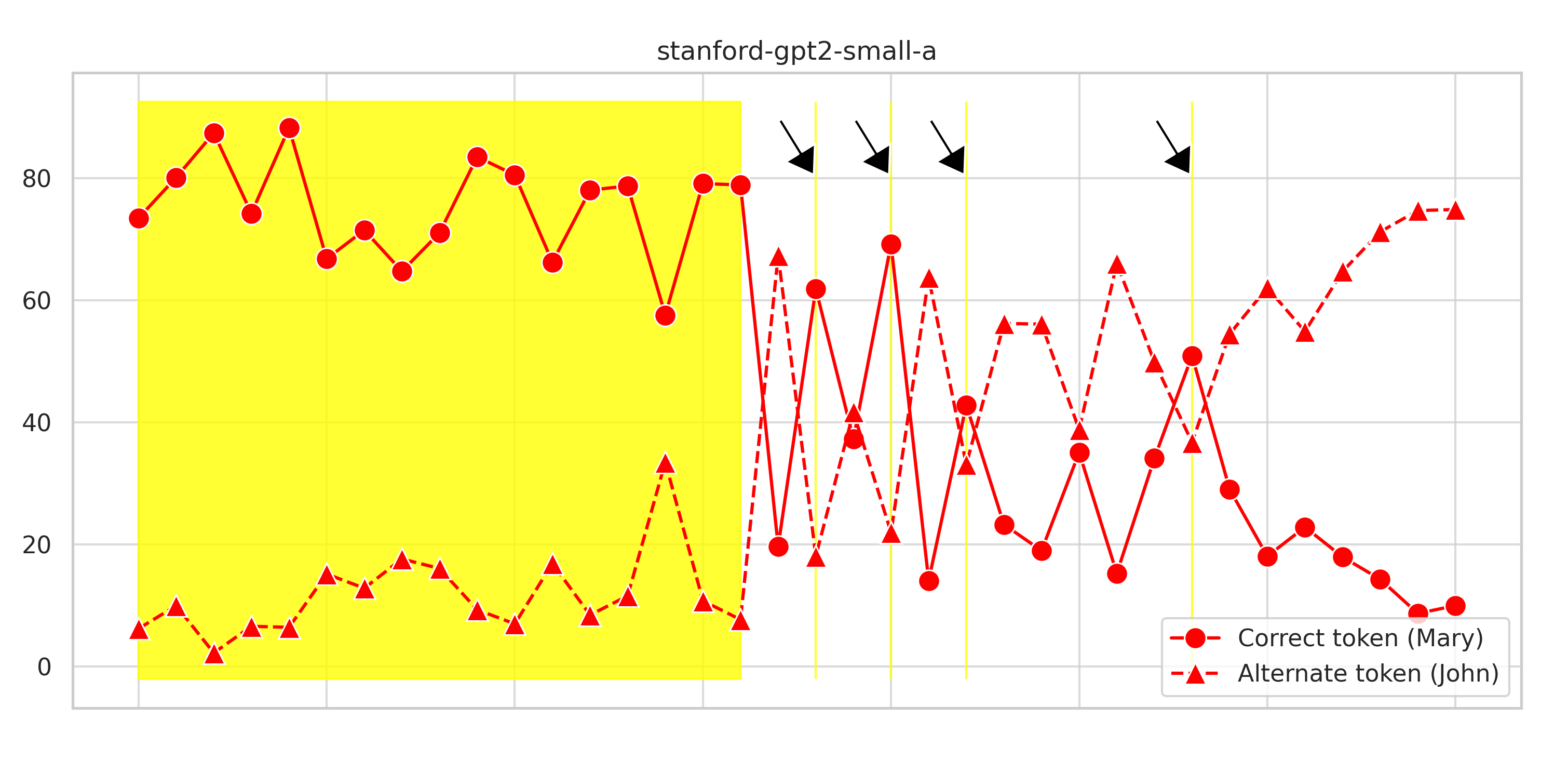

Stability of interpretable circuits across models of varying size

showcase (WIP) | repo | brief report

This repo contains my ongoing experiments to better understand the recent advances in mechanistic interpretability research.


Finetuning open source models under low-resource constraint

NeurIPS LLM Efficiency Challenge, 2023

challenge | repo | models | data

I participated in the LLM efficiency challenge and finetuned performant, open source models using custom open source datasets.


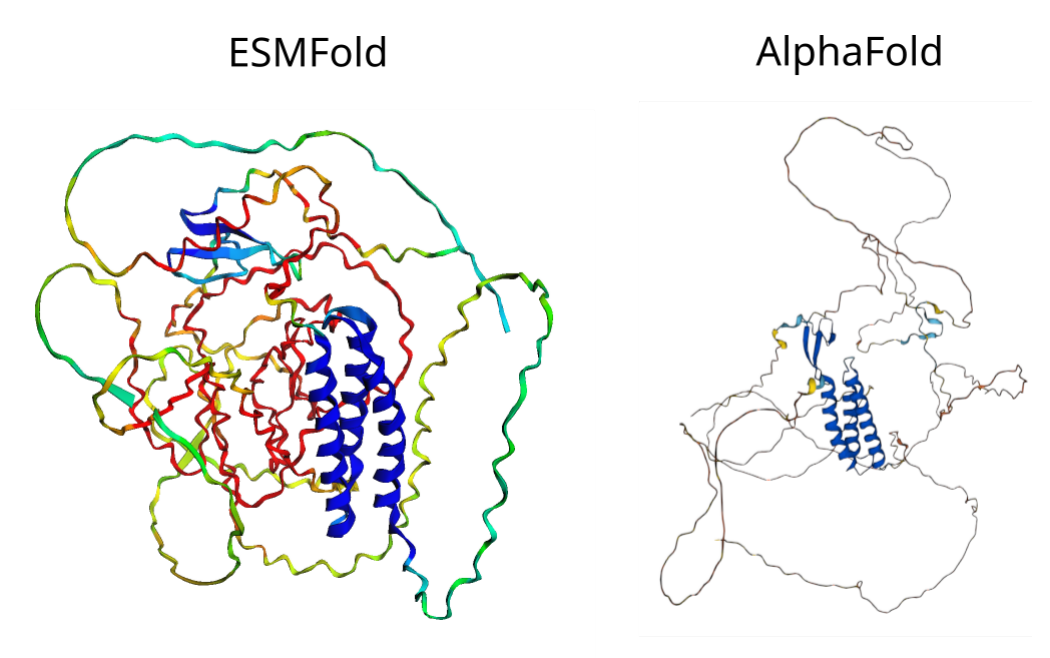

Applying protein language models to predicting disease causing genetic mutations in medically actionable genes

Onuralp Soylemez, Pablo Cordero

NeurIPS Workshop on Learning Meaningful Representations of Life, 2022

workshop | paper | code

We developed a protein language model evaluation framework and revealed underappreciated structural features of proteins that are missed by other structure predictors like AlphaFold.


Fine-tuning large foundational models in cancer biology

Pablo Cordero, Onuralp Soylemez, Darren Zhu

Bio x ML hackathon, 2022

hackathon | code | 5-min summary

We placed 2nd ($3,000 prize) with our project on finetuning large language models for single cell biology on smaller datasets like DepMap cancer dependency.


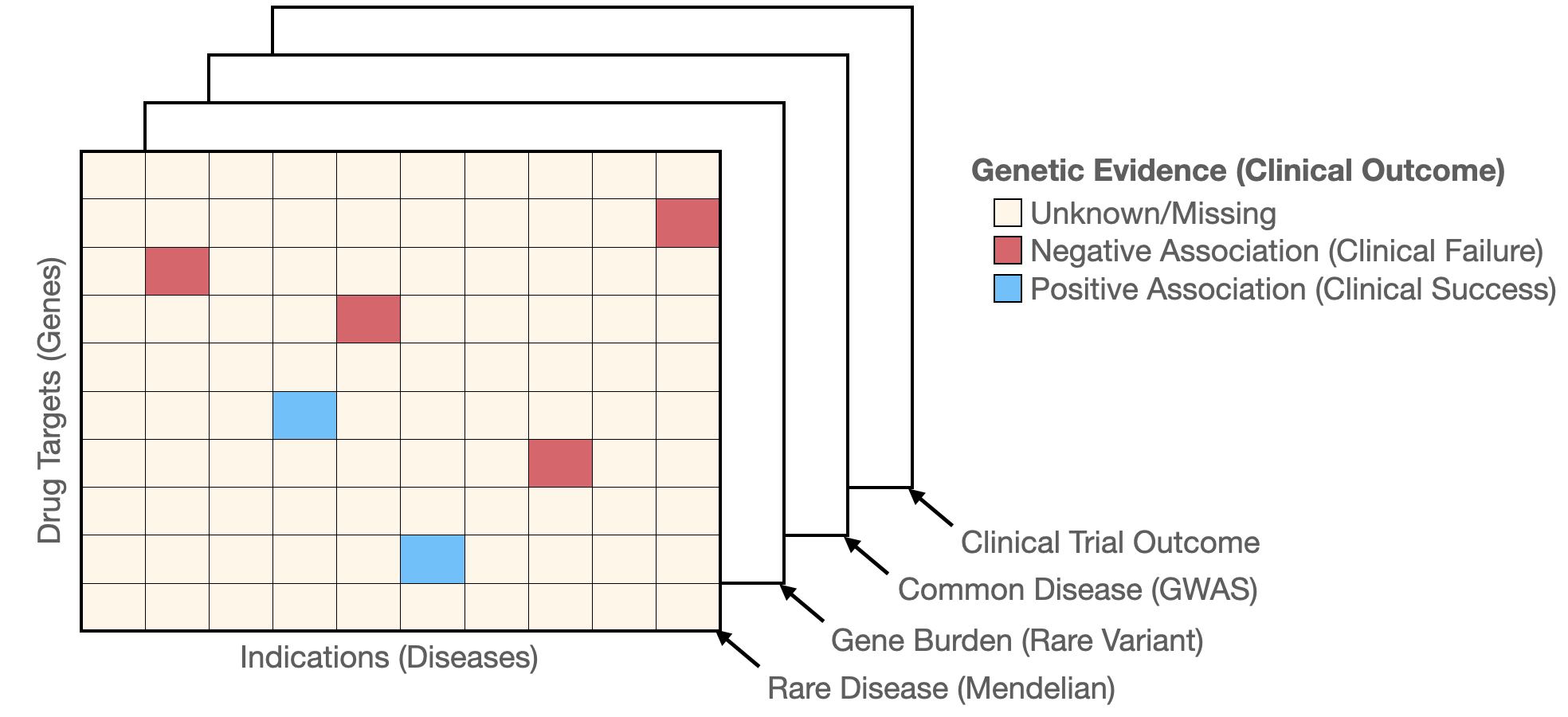

Prioritization of drug targets using Bayesian tensor factorization

ICML Workshop on Computational Biology, 2022

workshop | paper | code

Drug targets supported by human genetics evidence have been shown to have better odds of success. We used Bayesian tensor factorization to integrate different types of human genetics evidence, from rare genetic diseases to complex disorders.


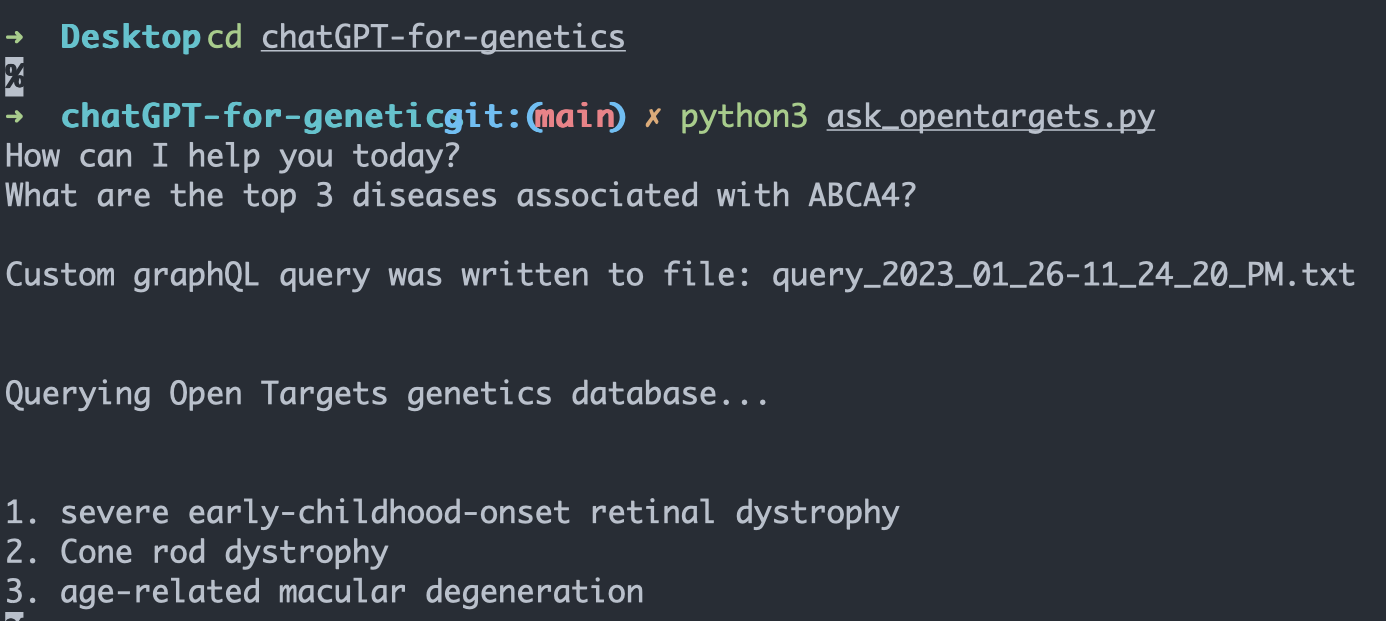

Accelerating scientific discovery process using large language models

interview | code

Proof-of-concept "chatGPT for genetics".


Design inspired by Chloe Hsu's website.